3D Scene Querying Interface

Bachelor thesis at the EPFL Digital Humanities Lab — a natural language and semantic querying system over 3D point cloud data, with an interactive interface for spatial exploration and object retrieval.

Project Overview

Developed at the EPFL Digital Humanities Lab (DHLab), this bachelor thesis project builds a system for querying 3D scenes in natural language. Given a 3D point cloud or mesh, such as a scanned room, building, or outdoor environment, users can ask questions like "show me all chairs near the window" or "highlight objects above 1.5 meters", and the system returns the matching geometry highlighted in the interactive 3D viewer.

The core challenge is bridging the gap between free-form text queries and the structured, geometric nature of 3D spatial data. The interface is designed to be accessible to non-technical domain experts (historians, architects, archaeologists), while the pipeline behind it grounds each text query in the segmented objects and spatial relations of the scene.

Technical Architecture

- 3D data ingestion: Supports point clouds (.ply, .las), meshes, and RGB-D frames. Preprocessing includes normal estimation, voxelization, and semantic pre-segmentation.

- Semantic grounding: Objects in the scene are detected and labeled using a 3D instance segmentation model (Mask3D / PointGroup variants). Each instance is assigned a semantic class and spatial attributes (position, bounding box, size).

- Query parsing: User queries are parsed via a fine-tuned LLM that extracts semantic constraints (object type, spatial relations, attributes) and maps them to scene graph queries.

- Scene graph: The 3D scene is represented as a graph in which nodes are objects and edges are spatial relations (next to, above, inside, etc.). Queries are executed as graph traversals.
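To make the segmentation-to-scene-graph step concrete, here is a minimal sketch in plain Python. It assumes each segmented instance already carries a semantic label and an axis-aligned bounding box in metres with z pointing up. The `Instance` class, the relation rules, and the 1 m "near" threshold are hypothetical choices for illustration, not the definitions used in the thesis. Edges are precomputed for every ordered pair of objects, so a query such as "chairs near the window" reduces to a one-hop lookup.

```python
import math
from dataclasses import dataclass
from itertools import permutations

Vec3 = tuple[float, float, float]


@dataclass(frozen=True)
class Instance:
    """One segmented object: semantic class + axis-aligned bounding box (metres, z up)."""
    id: int
    label: str
    lo: Vec3  # minimum corner of the bounding box
    hi: Vec3  # maximum corner of the bounding box


def box_gap(a: Instance, b: Instance) -> float:
    """Shortest distance between two boxes; 0.0 if they touch or overlap."""
    gaps = [max(0.0, b.lo[i] - a.hi[i], a.lo[i] - b.hi[i]) for i in range(3)]
    return math.hypot(*gaps)


def relations(a: Instance, b: Instance, near_dist: float = 1.0) -> set[str]:
    """Relations that hold from a to b (illustrative rules and threshold)."""
    rels = set()
    if box_gap(a, b) <= near_dist:
        rels.add("near")
    footprints_overlap = all(a.lo[i] <= b.hi[i] and b.lo[i] <= a.hi[i] for i in (0, 1))
    if footprints_overlap and a.lo[2] >= b.hi[2]:
        rels.add("above")  # a's bottom is at or over b's top face, footprints overlapping
    if all(b.lo[i] <= a.lo[i] and a.hi[i] <= b.hi[i] for i in range(3)):
        rels.add("inside")
    return rels


def build_scene_graph(instances: list[Instance]) -> dict[int, dict[int, set[str]]]:
    """Directed adjacency map: graph[a][b] is the set of relations from a to b."""
    graph: dict[int, dict[int, set[str]]] = {inst.id: {} for inst in instances}
    for a, b in permutations(instances, 2):
        rels = relations(a, b)
        if rels:
            graph[a.id][b.id] = rels
    return graph


def query(instances, graph, subject: str, relation: str, anchor: str) -> list[int]:
    """One-hop traversal: `subject` nodes with a `relation` edge to any `anchor` node."""
    label = {inst.id: inst.label for inst in instances}
    return sorted(
        a for a, edges in graph.items()
        if label[a] == subject
        and any(relation in rels and label[b] == anchor for b, rels in edges.items())
    )


# Usage: a toy room (coordinates in metres).
scene = [
    Instance(1, "window", (0.0, 0.0, 0.8), (0.1, 1.5, 2.2)),
    Instance(2, "chair", (0.5, 0.2, 0.0), (1.0, 0.7, 0.9)),
    Instance(3, "chair", (4.0, 3.0, 0.0), (4.5, 3.5, 0.9)),
    Instance(4, "table", (2.0, 2.0, 0.0), (3.0, 3.0, 0.75)),
    Instance(5, "lamp", (2.4, 2.4, 0.75), (2.6, 2.6, 1.2)),
]
graph = build_scene_graph(scene)
print(query(scene, graph, "chair", "near", "window"))  # [2]
print(query(scene, graph, "lamp", "above", "table"))   # [5]
```

Precomputing every pair is quadratic in the number of objects, which is cheap for a single room with tens of instances; larger scenes would call for a spatial index to limit the candidate pairs.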

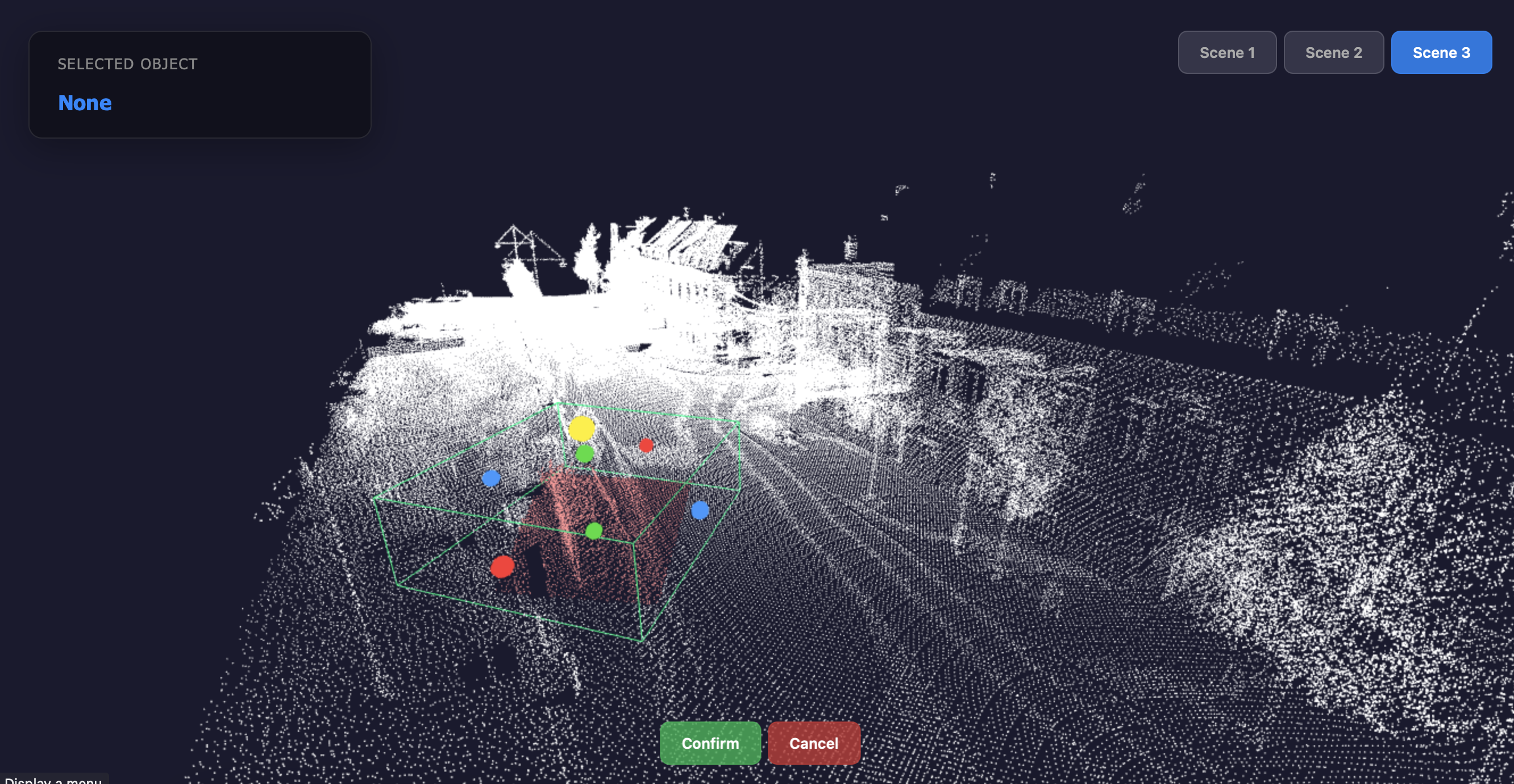

- Interactive viewer: A web-based 3D viewer (Three.js) renders the point cloud and highlights query results in real time, with orbit controls, layer toggling, and annotation tools.
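Highlighting results in the viewer ultimately means recoloring points. A minimal backend-side sketch, assuming each point carries the ID of the instance it was segmented into: matched points get a highlight color, every other point is dimmed, and the buffer is packed as little-endian float32 so it could travel as a binary WebSocket frame. The function names, colors, and dimming factor are hypothetical. On the Three.js side, such a buffer could be wrapped in a `Float32Array` and attached with `geometry.setAttribute('color', new THREE.BufferAttribute(colors, 3))` on a material created with `vertexColors: true`.

```python
import struct

RGB = tuple[float, float, float]


def highlight_colors(
    instance_ids: list[int],
    base_rgb: list[RGB],
    matched: set[int],
    highlight: RGB = (1.0, 0.55, 0.0),
    dim: float = 0.35,
) -> list[float]:
    """Flat [r, g, b, r, g, b, ...] color buffer, one triplet per point (values in 0..1).

    Points whose instance ID is in `matched` get the highlight color; all other
    points keep their scanned color scaled by `dim` so the matches stand out.
    """
    if len(instance_ids) != len(base_rgb):
        raise ValueError("need exactly one instance ID per point")
    out: list[float] = []
    for inst_id, (r, g, b) in zip(instance_ids, base_rgb):
        if inst_id in matched:
            out.extend(highlight)
        else:
            out.extend((r * dim, g * dim, b * dim))
    return out


def to_float32_le(buffer: list[float]) -> bytes:
    """Pack the buffer as little-endian float32 (4 bytes per value)."""
    return struct.pack(f"<{len(buffer)}f", *buffer)


# Usage: four white points, two of which belong to matched instance 7.
ids = [0, 7, 7, 3]
colors = [(1.0, 1.0, 1.0)] * 4
buf = highlight_colors(ids, colors, matched={7})
payload = to_float32_le(buf)
print(len(buf), len(payload))  # 12 48
```

Sending only the matched instance IDs and recoloring in the browser would use far less bandwidth; the full buffer is shown here because it makes the highlighting rule explicit.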

"Spatial data should be as queryable as a database; the interface is what makes 3D accessible to everyone, not just 3D experts."

Interface & UX

The interface is split into three panels: a query bar at the top for natural language input, a central 3D viewport for scene exploration (pan, orbit, zoom, selection), and a results panel on the right that lists matched objects with their attributes (semantic class, coordinates, dimensions, confidence score).
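The attributes in the results panel can be derived directly from each instance's points. A minimal sketch, assuming "coordinates" means the centroid (mean of the points) and "dimensions" means the axis-aligned extent along x, y, and z; the `ResultRow` schema and the 0.5 confidence cut-off are hypothetical.

```python
from dataclasses import dataclass

Vec3 = tuple[float, float, float]


@dataclass(frozen=True)
class ResultRow:
    """One line in the results panel (hypothetical schema)."""
    instance_id: int
    semantic_class: str
    centroid: Vec3     # mean of the instance's points, metres
    dimensions: Vec3   # axis-aligned extent along x, y, z, metres
    confidence: float  # segmentation score in [0, 1]


def summarize(instance_id: int, semantic_class: str,
              points: list[Vec3], confidence: float) -> ResultRow:
    """Derive display attributes from the raw points of one segmented instance."""
    if not points:
        raise ValueError("instance has no points")
    xs, ys, zs = zip(*points)
    n = len(points)
    centroid = (sum(xs) / n, sum(ys) / n, sum(zs) / n)
    dimensions = (max(xs) - min(xs), max(ys) - min(ys), max(zs) - min(zs))
    return ResultRow(instance_id, semantic_class, centroid, dimensions, confidence)


def panel_rows(rows: list[ResultRow], min_confidence: float = 0.5) -> list[ResultRow]:
    """Drop low-confidence matches and list the most confident first."""
    kept = [r for r in rows if r.confidence >= min_confidence]
    return sorted(kept, key=lambda r: r.confidence, reverse=True)


# Usage
chair = summarize(2, "chair",
                  [(0.0, 0.0, 0.0), (1.0, 2.0, 0.5), (1.0, 0.0, 0.5), (0.0, 2.0, 0.0)],
                  0.91)
print(chair.centroid, chair.dimensions)  # (0.5, 1.0, 0.25) (1.0, 2.0, 0.5)
```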

Users can also draw spatial selection boxes directly in the 3D viewport to filter results, combine free-text and structured attribute filters, and export selections as annotated point clouds or JSON metadata for downstream analysis.
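Combining a drawn selection box with structured attribute filters amounts to a conjunction of simple predicates. A minimal sketch, assuming the viewer has already converted the box drawn on screen into a world-space axis-aligned box, and that each object record is a dict with the hypothetical keys `id`, `class`, `centroid`, and `confidence`. Only the JSON metadata export is shown here, not the annotated point-cloud export.

```python
import json
from typing import Optional, Sequence


def in_box(p: Sequence[float], lo: Sequence[float], hi: Sequence[float]) -> bool:
    """True if point p lies inside the axis-aligned box [lo, hi], bounds included."""
    return all(lo_i <= c <= hi_i for c, lo_i, hi_i in zip(p, lo, hi))


def select(objects: list[dict], box_lo: Sequence[float], box_hi: Sequence[float],
           semantic_class: Optional[str] = None, min_confidence: float = 0.0) -> list[dict]:
    """Keep objects whose centroid is in the box AND that pass every attribute filter."""
    return [
        o for o in objects
        if in_box(o["centroid"], box_lo, box_hi)
        and (semantic_class is None or o["class"] == semantic_class)
        and o["confidence"] >= min_confidence
    ]


def export_selection(selection: list[dict], scene_id: str) -> str:
    """Serialize a selection as JSON metadata for downstream analysis."""
    return json.dumps({"scene_id": scene_id, "count": len(selection),
                       "objects": selection}, indent=2)


# Usage: the scene ID and records are made up for illustration.
objects = [
    {"id": 2, "class": "chair", "centroid": [0.75, 0.45, 0.45], "confidence": 0.91},
    {"id": 3, "class": "chair", "centroid": [4.25, 3.25, 0.45], "confidence": 0.88},
    {"id": 4, "class": "table", "centroid": [2.5, 2.5, 0.375], "confidence": 0.95},
]
picked = select(objects, box_lo=(0.0, 0.0, 0.0), box_hi=(3.0, 3.0, 2.0),
                semantic_class="chair")
print([o["id"] for o in picked])  # [2]
print(export_selection(picked, scene_id="room-01"))
```

Here an object counts as selected when its centroid falls inside the box; requiring the whole bounding box to be contained would be a stricter alternative.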

Stack

- Backend: Python, FastAPI, Open3D, PyTorch (3D segmentation), LangChain (query parsing)

- Frontend: Three.js, TypeScript, React — real-time WebSocket updates for query results

- Data formats: PLY, LAS, OBJ, glTF — processed via a unified ingestion pipeline

- Models: Mask3D for instance segmentation, fine-tuned GPT-based extractor for query parsing
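Because the output of a GPT-based extractor is not guaranteed to be well-formed, it helps to validate it before any graph traversal runs. A minimal sketch, assuming the extractor is prompted to return a flat JSON object with the hypothetical keys `target`, `relation`, `anchor`, and `min_height`; the relation vocabulary is illustrative too. LangChain's structured-output parsers can fill the same role; this version sticks to the standard library.

```python
import json
from dataclasses import dataclass
from typing import Optional

# Relation vocabulary the scene graph understands (hypothetical list).
ALLOWED_RELATIONS = {"near", "next_to", "above", "below", "inside"}


@dataclass(frozen=True)
class ParsedQuery:
    target: str                          # object class to return, e.g. "chair"
    relation: Optional[str] = None       # spatial relation to an anchor object
    anchor: Optional[str] = None         # class of the anchor object, e.g. "window"
    min_height: Optional[float] = None   # attribute filter, metres


def parse_extractor_output(raw: str) -> ParsedQuery:
    """Validate the extractor's JSON before it reaches the scene graph.

    Raises ValueError (json.JSONDecodeError is a subclass) on malformed output,
    so the API can ask the user to rephrase instead of running a bogus query.
    """
    data = json.loads(raw)
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    target, relation, anchor = data.get("target"), data.get("relation"), data.get("anchor")
    if not isinstance(target, str) or not target.strip():
        raise ValueError("missing 'target' object class")
    if (relation is None) != (anchor is None):
        raise ValueError("'relation' and 'anchor' must appear together")
    if relation is not None:
        if not isinstance(relation, str) or relation not in ALLOWED_RELATIONS:
            raise ValueError(f"unsupported relation: {relation!r}")
        if not isinstance(anchor, str) or not anchor.strip():
            raise ValueError("'anchor' must be a non-empty string")
    min_height = data.get("min_height")
    if min_height is not None:
        min_height = float(min_height)
    return ParsedQuery(
        target=target.strip().lower(),
        relation=relation,
        anchor=anchor.strip().lower() if anchor is not None else None,
        min_height=min_height,
    )


# Usage
print(parse_extractor_output('{"target": "Chair", "relation": "near", "anchor": "window"}'))
# ParsedQuery(target='chair', relation='near', anchor='window', min_height=None)
```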